For the “Impact Guardian”—the development director balancing mission-critical relationships with crushing administrative volume—generative AI initially appeared as a savior. The promise was seductive: grant proposals written in seconds, donor stewardship emails drafted instantly, and hours of time reclaimed for genuine connection.

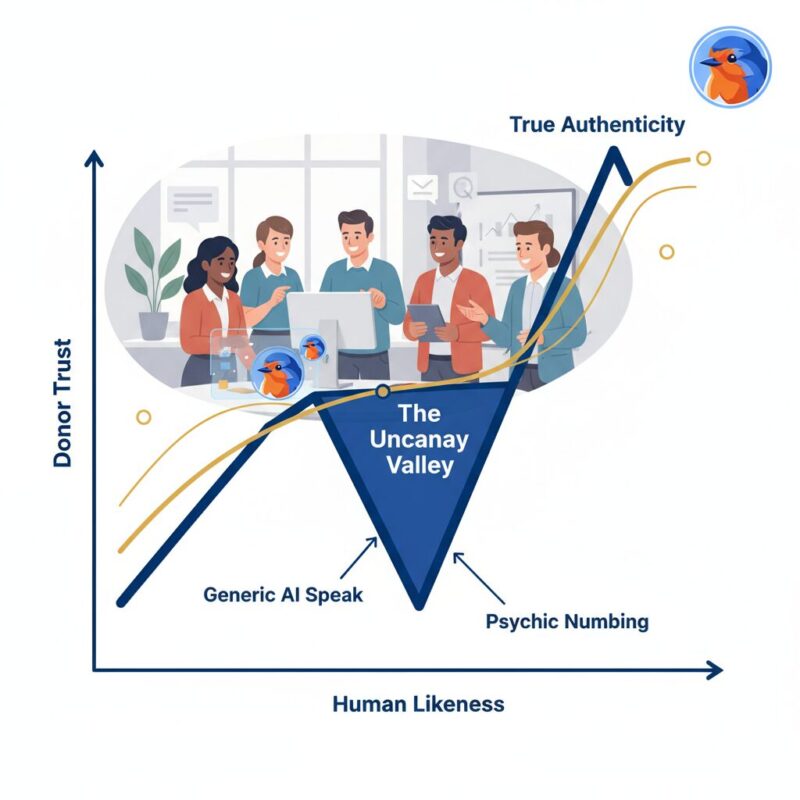

However, as of January 2025, a new crisis has emerged in the nonprofit sector: the “Uncanny Valley” of donor communications. Major donors are beginning to recoil from emails that feel technically perfect but emotionally hollow, saturated with words like “delve,” “tapestry,” and “unwavering commitment.” This phenomenon, known as “AI Speak,” threatens to erode the trust capital that organizations have spent decades building.

The solution is not to abandon AI, but to master it. By shifting from passive prompting to active “Narrative Sovereignty,” nonprofits can use tools like FundRobin to handle the heavy lifting while humans retain the heart.

TL;DR: Nonprofits can defeat “AI Speak” and preserve donor trust by adopting the “Sandwich Method,” a workflow where human strategy frames the request, AI drafts the content, and human editors polish the emotional nuance. To avoid robotic empathy, organizations must purge tell-tale AI words (e.g., “delve,” “tapestry”) and build proprietary “Knowledge Libraries” using past successful grants rather than relying on generic public models. Tools like FundRobin enable this “Grounded AI” approach, protecting data privacy and narrative integrity while the AI drafts roughly 80% of the word count.

The ‘Uncanny Valley’ of Donor Stewardship: Diagnosing ‘AI Speak’

The “Uncanny Valley” is a concept originally from robotics, describing the revulsion humans feel when an artificial simulation looks almost human but is slightly, perceptibly off. In 2025, this valley has migrated to text. When a long-time donor receives a stewardship email that is grammatically flawless yet lacks the specific, messy warmth of genuine gratitude, they experience a form of “psychic numbing.”

This numbing is not just a vague feeling; it is a measurable psychological response to “Robotic Empathy.”

AI models are trained to predict the next plausible word, not to feel. Consequently, they default to high-probability, low-risk language—generic platitudes about “transformative impact” and “rich tapestries of community.”

According to the Stanford Social Media Lab, linguistic markers of AI-generated text often include a distinct lack of variance in sentence structure and an over-reliance on connecting words that mimic logic but lack substance. For a donor, reading this is akin to receiving a form letter from a close friend; the breach of social contract is immediate and damaging.

Furthermore, Grammarly notes that AI-generated text often sounds “polished but stiff,” lacking the idiomatic idiosyncrasies that signal human authorship. In high-stakes fundraising, where six-figure gifts are built on personal relationships, this stiffness is not a stylistic flaw—it is a strategic liability. The efficiency gained by automating the draft is lost entirely if the output alienates the recipient. This is often exacerbated by lexical blindness, where teams fail to see the linguistic patterns that distance them from their audience.

The ‘Sandwich Method’: A Human-in-the-Loop Workflow

To navigate this valley without retreating to manual drudgery, smart fundraising teams are adopting the “Sandwich Method.” This “Human-in-the-Loop” (HITL) workflow acknowledges that while AI is an excellent engine, it makes a terrible steering wheel.

The Sandwich Method consists of three distinct phases:

Phase 1: The Top Bun (Human Strategy)

Before a single prompt is entered, the human fundraiser must define the “Narrative Sovereignty.” This involves establishing the emotional hook, the specific context of the donor relationship, and the strategic objective of the communication. You are not asking the AI to “write a grant”; you are asking it to execute a specific argument you have already designed.

Phase 2: The Meat (AI Execution)

This is where the heavy lifting occurs. Using specialized tools, you generate the first draft. The AI handles the structural compliance, the formatting, and the synthesis of data. In this phase, a tool like FundRobin creates the bulk of the content—roughly 80% of the word count—ensuring that all grant requirements are met and the logic flows linearly. As detailed in the Ethics of Grant Automation, this phase allows development directors to escape the “blank page paralysis” that often bottlenecks grant pipelines.

Phase 3: The Bottom Bun (Human Polish)

The final phase is the “Emotional Injection” pass. The human editor returns to “de-robot” the text (see the protocol below). This involves injecting specific anecdotes, ensuring the tone matches the organization’s unique voice, and verifying that the gratitude expressed feels earned and specific. This phase transforms a “submission” into a “story.”

Comparing a raw AI draft to a “Sandwiched” draft often reveals the difference between a rejection and an award. The raw draft might technically answer the prompt, but the polished version connects the data to a human heartbeat.

Building Your ‘Knowledge Library’: Beyond Generic LLMs

A major cause of generic “AI Speak” is generic input. If you paste a grant question into a public model like ChatGPT, it draws from the entire internet—a “knowledge soup” of average content. To achieve Narrative Sovereignty, nonprofits must move from simple Prompt Engineering to Context Engineering.

Context Engineering involves curating a proprietary “Knowledge Library.” This is a secure repository of your organization’s best work: successful past grant proposals, impact reports, and donor letters that perfectly capture your voice. When you ground an AI in your own documents, supplying them as context alongside each request, it stops sounding like a robot and starts sounding like your best writer on their best day. This is a critical component of strategic AI implementation.

According to the Twilio Nonprofit Digital Engagement Report, personalized, data-driven engagement is the primary driver of donor retention in digital channels. By feeding the AI specific success stories and impact metrics from your library, you ensure the output is rich with the specific evidence that generic models lack.
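
As a rough illustration of Context Engineering, the sketch below picks the library excerpts most relevant to a grant question and places them ahead of the question in the prompt. It is a minimal sketch under stated assumptions: the function names (`tokenize`, `select_excerpts`, `build_prompt`), the sample library, and the word-overlap ranking are all hypothetical, and this is not how FundRobin or any particular platform works. Production systems typically use more sophisticated retrieval than simple word overlap.

```python
import re

def tokenize(text):
    """Lowercase a string and return its set of alphabetic words."""
    return set(re.findall(r"[a-z']+", text.lower()))

def select_excerpts(question, library, top_k=2):
    """Rank library excerpts by word overlap with the question; keep the top_k."""
    question_words = tokenize(question)
    ranked = sorted(
        library,
        key=lambda excerpt: len(question_words & tokenize(excerpt)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, library, top_k=2):
    """Assemble a prompt that places the selected excerpts before the question."""
    excerpts = select_excerpts(question, library, top_k)
    context = "\n\n".join(
        f"Excerpt {i}: {text}" for i, text in enumerate(excerpts, start=1)
    )
    return (
        "Use only the facts and voice in these excerpts from our past work.\n\n"
        f"{context}\n\nGrant question: {question}"
    )

# Hypothetical Knowledge Library entries, invented for illustration only.
LIBRARY = [
    "Our literacy program tutored 120 children in 2023.",
    "The annual gala raised funds for the food bank.",
    "Literacy outcomes improved after we hired reading coaches.",
]

print(build_prompt("Describe your literacy program outcomes.", LIBRARY))
```

The resulting prompt carries your own evidence into the request, so the human editor in Phase 3 starts from a draft that already cites real programs rather than generic claims.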

This is a key differentiator for platforms like FundRobin. Unlike open web models, FundRobin’s “Smart Matching” and proposal generation engines are designed to utilize specific sector data (UK/EU/Global) and your private library. This creates a “Private Knowledge Loop” where your data remains yours, contrasting sharply with public models that may use your inputs to train their general systems—a critical distinction for data privacy.

The ‘De-Roboting’ Protocol: Tactical Frameworks for Authenticity

Even with a strong Knowledge Library, AI models have stubborn habits. To finalize your content, implement this tactical “De-Roboting Protocol.”

Consider this your editorial checklist before hitting “send” or “submit.”

1. The ‘Banned Words’ List (Ctrl+F Search)

Certain words have become hallmarks of AI generation due to their overuse in training data. Scan your drafts for these terms and replace them with specific, active language:

- Delve: Replace with “investigate,” “analyze,” or “explore.”

- Tapestry: Replace with “network,” “community,” or “system.”

- Landscape: Replace with “sector,” “field,” or “environment.”

- Testament: Replace with “proof,” “evidence,” or “result.”

- Unwavering: Replace with “consistent,” “dedicated,” or “steady.”
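
For teams that review many drafts, the Ctrl+F search can be scripted. The following is a minimal sketch, assuming drafts are available as plain text; the `BANNED_WORDS` table mirrors the list above, while the function name `find_banned_words` and the sample draft are hypothetical.

```python
import re

# Flagged words and replacement suggestions, copied from the list above.
BANNED_WORDS = {
    "delve": ["investigate", "analyze", "explore"],
    "tapestry": ["network", "community", "system"],
    "landscape": ["sector", "field", "environment"],
    "testament": ["proof", "evidence", "result"],
    "unwavering": ["consistent", "dedicated", "steady"],
}

def find_banned_words(text):
    """Return (line_number, word, suggestions) for every flagged word in text."""
    hits = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for word, suggestions in BANNED_WORDS.items():
            # \w* also catches simple suffixed forms such as "delves".
            if re.search(rf"\b{word}\w*", line, flags=re.IGNORECASE):
                hits.append((line_number, word, suggestions))
    return hits

# Hypothetical draft text used only to demonstrate the scan.
draft = (
    "We delve into the rich tapestry of our community.\n"
    "Your gift funded 40 tutoring sessions."
)
for line_number, word, suggestions in find_banned_words(draft):
    print(f"line {line_number}: '{word}' -> try {', '.join(suggestions)}")
```

The script only flags candidates; choosing the replacement that fits the sentence remains a human decision.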

2. The ‘Gratitude Prompt’

When generating donor communications, force the AI to prioritize the donor’s agency. Instead of prompting “Write a thank you letter,” use:

“Write a thank you note that focuses entirely on the donor’s action, not our organization’s size. Use specific impact metrics (e.g., ‘your $50 provided X’). Avoid praising the organization; praise the partner.”

3. Fact-Checking & Anti-Hallucination

As noted by Inside Higher Ed, one of the surest ways to distinguish AI writing is the presence of “hallucinated” facts or vague generalizations. The De-Roboting protocol requires a “Fact Audit.” Every claim of impact must be traced back to a source document. If the AI says “we have significantly improved literacy,” the human editor must change it to “we improved literacy rates by 14% in 2024.”
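
A first pass of the Fact Audit can be partly automated by flagging sentences that make an impact claim in vague terms without any number. This is a heuristic sketch only: the `VAGUE_TERMS` list and the function name `flag_vague_claims` are assumptions for illustration, and the check cannot confirm that a number is correct. Tracing each figure back to a source document still has to be done by the human editor.

```python
import re

# Hypothetical list of vague intensifiers; extend it for your own drafts.
VAGUE_TERMS = (
    "significantly",
    "greatly",
    "dramatically",
    "substantially",
    "transformative",
)

def flag_vague_claims(text):
    """Return sentences that use a vague term but contain no digit."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        lowered = sentence.lower()
        has_vague_term = any(term in lowered for term in VAGUE_TERMS)
        has_number = any(ch.isdigit() for ch in sentence)
        if has_vague_term and not has_number:
            flagged.append(sentence)
    return flagged

sample = (
    "We have significantly improved literacy. "
    "We improved literacy rates by 14% in 2024."
)
for sentence in flag_vague_claims(sample):
    print("Needs a sourced figure:", sentence)
```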

FundRobin’s Solution: ‘Grounded AI’ and Data Privacy

The ultimate defense against “AI Speak” and hallucination is choosing the right infrastructure. This is where “Grounded AI” becomes the industry standard for the nonprofit sector.

“Grounded AI” refers to systems that are anchored in verifiable facts and specific documents rather than just statistical probability. FundRobin’s Anti-Hallucination Engine cites its sources, referencing specific UK/Global funding standards and your uploaded documents to ensure every proposal is fact-based. This is especially useful for AI funding strategies for small nonprofits looking to compete with larger institutions.

More importantly, data sovereignty is a non-negotiable for the ethical fundraiser. As outlined in the AI Playbook 2025, user data processed through FundRobin is never used to train public models. This preserves your organization’s unique intellectual property. You get the speed of automation without the risk of leaking your donor strategy to the open web or your competitors.

By using a tool designed for compliance—checking against GDPR and Charity Commission rules automatically—you free up mental bandwidth. The goal of the Robin AI Assistant is not to replace the fundraiser, but to extend their runway, allowing them to focus on the high-value relationship building that no machine can replicate.

Frequently Asked Questions

What is the Sandwich Method for AI grant writing?

The Sandwich Method is a 3-step “Human-in-the-Loop” workflow designed to maintain narrative integrity. It starts with Phase 1 (The Top Bun), where a human defines the strategy and emotional hook. Phase 2 (The Meat) involves the AI generating the draft and handling structural compliance. Finally, Phase 3 (The Bottom Bun) sees the human editor polishing the text for voice, emotional nuance, and specific impact data.

What are common words that indicate AI-generated text?

Words like “delve,” “tapestry,” “testament,” “landscape,” and “unwavering commitment” are common linguistic markers of AI-generated text. According to Grammarly, AI-generated text often sounds “polished but stiff.” A lack of specific data points and an over-reliance on these generic terms are red flags for donors.

Is it ethical to use AI for writing grant proposals?

Yes, provided you maintain a “Human-in-the-Loop” (HITL) approach where humans verify accuracy and retain narrative sovereignty. As discussed in FundRobin’s Ethics of Grant Automation, using AI to handle the administrative burden of drafting is ethical, but submitting unverified AI content or using it to deceive donors about your capabilities is not.

Why does AI-generated empathy often fail with donors?

AI-generated empathy often fails because it produces “Robotic Empathy”—emotional language that mimics connection without genuine feeling, leading to “psychic numbing” in donors. Stanford Social Media Lab research suggests that humans are highly attuned to linguistic markers of authenticity, and generic, high-volume AI content can trigger a feeling of revulsion or distrust known as the “Uncanny Valley.”

How does FundRobin prevent AI hallucinations in proposals?

FundRobin utilizes “Grounded AI,” which anchors all generated content in verifiable sources and your specific “Knowledge Library.” Unlike generic LLMs that may fabricate facts to complete a pattern, FundRobin cites its sources (such as UK/Global funding standards) and does not use your private data to train public models, ensuring both accuracy and privacy.

Key Takeaways:

- Adopt the ‘Sandwich Method’: Implement the Human Strategy → AI Drafting → Human Polish workflow so that AI drafts roughly 80% of the word count while humans maintain narrative integrity.

- Purge ‘AI Speak’: Immediately remove words like ‘delve’, ‘tapestry’, and ‘unwavering’ to prevent ‘psychic numbing’ among major donors.

- Build a ‘Knowledge Library’: Stop using generic zero-shot prompts; ground your AI sessions in your past successful grants so the output reflects your unique voice and the approaches that have won before.

- Leverage ‘Grounded AI’: Use platforms like FundRobin that cite sources and protect your IP, ensuring compliance with GDPR and preventing data leakage to public models.

- Focus on ROI: By automating the ‘drudgery’ of drafting, development directors can reclaim 15-20 hours per week for high-value donor stewardship, the relationship work that underpins long-term revenue growth.

Conclusion: Reclaiming Narrative Sovereignty

The future of fundraising is not automated; it is augmented. As we move toward 2026, the sector is adopting a “High Tech, High Touch” model where AI handles the data processing and drafting, allowing humans to handle the relationships.

Narrative Sovereignty means owning your story, regardless of who—or what—holds the pen for the first draft. It means refusing to let efficiency compromise authenticity. By adopting the Sandwich Method and utilizing grounded tools like FundRobin, you can escape the Uncanny Valley.

As noted by The Chronicle of Philanthropy, the tools of the future will serve as partners, not replacements. The “Impact Guardian” of tomorrow is one who treats AI not as a writer, but as a robotic research assistant—one that requires clear direction, strict editing, and a human heart to truly succeed.
