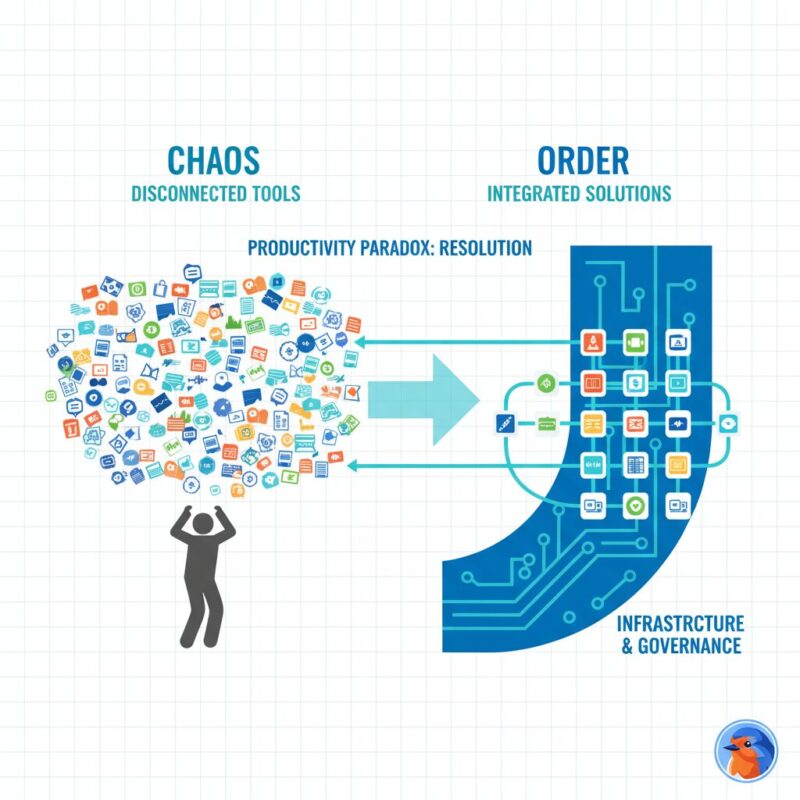

The promise of Artificial Intelligence was supposed to be liberation. For nonprofit leaders, the pitch was simple: automate the drudgery, liberate your staff, and focus entirely on the mission. Yet, as of January 2025, the reality on the ground feels starkly different. Instead of clarity, many Executive Directors and COOs are experiencing a profound “Productivity Paradox”—a state where the rapid adoption of disconnected tools has increased administrative debt rather than reducing it.

If you feel like you are working harder to manage the technology than the technology is working for you, you are not alone. The friction you are experiencing is not a failure of adoption; it is a failure of infrastructure. We are entering a new phase of digital maturity. The “Wild West” era of ad-hoc experimentation is closing. In its place, a new standard is emerging: The 2026 Infrastructure Mandate. This is the shift from asking “What tools should we use?” to “How do we govern what we have?”

TL;DR: The “Infrastructure-First” approach prioritizes governance and policy over tool adoption to prevent staff burnout and secure future funding. By auditing workflows, implementing “Grounded AI” solutions like FundRobin, and establishing a formal AI Use Policy, nonprofits can mitigate legal risks and align automation with their mission. As of 2025, this strategy is the primary safeguard against the “Productivity Paradox” and increasing funder scrutiny.

Table of Contents

- The ‘Productivity Paradox’ & AI Fatigue: Why Tools Failed You

- The 2026 Funding Frontier: Navigating the ‘AI Arms Race’

- Governance as Strategy: The ‘Infrastructure-First’ Roadmap

- Precision Fundraising: Balancing Automation with Donor Trust

- The ‘Infrastructure-First’ Tech Stack: Selecting Safe Tools

- Leading Through Change: Bridging the Expertise Gap

- Frequently Asked Questions


The ‘Productivity Paradox’ & AI Fatigue: Why Tools Failed You

The initial wave of AI adoption in the nonprofit sector was driven by anxiety—the fear of being left behind. This led to a “tool-first” mentality where organizations subscribed to generative apps before defining the problems they were solving. The result is a workforce suffering from acute technological exhaustion.

According to Psychology Today, “AI fatigue” is a growing psychological phenomenon characterized by the cognitive load of constantly relearning workflows. For nonprofit staff, who are already prone to mission-driven burnout, this added layer of complexity can be paralyzing. The paradox is that by adding efficiency tools without a governance layer, leaders have inadvertently created more work—more passwords to manage, more outputs to fact-check, and more ethical grey areas to navigate.

The root cause of this fatigue is the “10% Policy” liability. Industry data suggests that while nearly all nonprofits are experimenting with AI, fewer than 10% have a formal, board-approved usage policy. This gap leaves staff to make individual ethical decisions every day, adding to their mental load. Moving from ad-hoc chaos to an “AI-Native” infrastructure, and solving the nonprofit efficiency crisis, means pausing the influx of new tools and stabilizing the foundation you already have.

The 2026 Funding Frontier: Navigating the ‘AI Arms Race’

The pressure isn’t just internal; it is increasingly external. The grantmaking landscape is undergoing a silent revolution. We are currently witnessing an “AI Arms Race” between grant writers equipped with generative tools and grantmakers deploying automated screening algorithms.

However, the dynamic is shifting. Funders are no longer just looking for the right keywords; they are scrutinizing the methodology of the application. According to insights from Bridgespan, the social sector is pivoting toward rigorous evaluation of how technology is applied. In 2026, “Shadow AI”—the undisclosed use of generic Large Language Models (LLMs) to generate proposals—will move from a grey area to a liability.

Grantmakers are beginning to rewrite eligibility criteria to penalize “lazy” generation while rewarding strategic, transparent adoption.

This creates a dangerous trap for nonprofits using public, hallucination-prone models. The solution is “Grounded AI”—technology that anchors generative capabilities in verified data. Tools that offer Smart Proposal Generation functionality, like FundRobin, are designed specifically for this compliance-heavy environment. Unlike generic chatbots, these systems prioritize citation and factual accuracy, ensuring that your narrative remains authentic to your mission while satisfying the increasing demand for data sovereignty in the application process.

Governance as Strategy: The ‘Infrastructure-First’ Roadmap

Governance is often viewed as a bureaucratic hurdle, but in the context of AI, it is your ultimate strategic shield.

To move from anxiety to action, follow this infrastructure-first roadmap:

Step 1: The Mission-Alignment Audit

Before drafting a single clause, conduct an audit to decide what you will not automate. Determine which donor touchpoints require human empathy and which administrative burdens are ripe for automation. This boundary setting is critical for maintaining the human-in-the-loop.

Step 2: Drafting the ‘AI Use Policy’

Your policy does not need to be a 50-page legal document, but it must address 501(c)(3)-specific risks. According to Whole Whale’s 2025 Policy Analysis, essential clauses must cover data privacy, prohibited uses (e.g., entering donor PII into public models), and attribution requirements.
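A prohibited-use clause is easier to follow when staff have a quick check to run before pasting text into a public model. The sketch below is one hypothetical way to do that, not a method from any policy template: the function names and both patterns are assumptions for illustration, and the patterns only catch obvious email addresses and phone numbers. It will miss names, postal addresses, and gift histories, so it supplements human judgment rather than replacing it.

```python
import re

# Assumed, deliberately simple patterns. Real donor PII takes many more forms.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def find_possible_pii(text: str) -> list[str]:
    """Return substrings that look like email addresses or phone numbers."""
    return EMAIL_RE.findall(text) + PHONE_RE.findall(text)


def safe_for_public_model(text: str) -> bool:
    """True only if the simple patterns above find nothing suspicious."""
    return not find_possible_pii(text)


if __name__ == "__main__":
    prompt = "Email jane.doe@example.org about her gift."
    if not safe_for_public_model(prompt):
        print("Blocked, possible PII:", find_possible_pii(prompt))
```

A check like this is crude by design: it can flag harmless number sequences and it cannot recognize a donor's name, which is why the policy clause and staff training still carry the real weight.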

Step 3: The ‘Human-in-the-Loop’ Protocol

Define the review process. No AI-generated output—whether a grant report or a donor email—should leave the organization without human verification. This protocol is your insurance policy against hallucinations.
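If your drafts pass through any internal tooling, the review rule can be enforced rather than merely stated. The following is a minimal sketch under that assumption: `Draft`, `approve`, and `release` are hypothetical names invented for this example, and a real workflow would also record timestamps and what the reviewer actually checked.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    """A piece of AI-assisted content awaiting release (hypothetical model)."""
    title: str
    body: str
    reviewed_by: Optional[str] = None  # name of the person who verified it


def approve(draft: Draft, reviewer: str) -> Draft:
    """Record that a named person has checked the draft."""
    if not reviewer.strip():
        raise ValueError("A reviewer name is required")
    draft.reviewed_by = reviewer.strip()
    return draft


def release(draft: Draft) -> str:
    """Refuse to release anything that has no recorded human review."""
    if draft.reviewed_by is None:
        raise PermissionError(f"'{draft.title}' has not been human-verified")
    return f"Released '{draft.title}' (verified by {draft.reviewed_by})"


if __name__ == "__main__":
    report = Draft("Q3 grant report", "AI-assisted draft text")
    print(release(approve(report, "A. Rivera")))
```

The design choice worth copying is that release fails by default: an unreviewed draft raises an error instead of slipping through silently.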

Step 4: Board Approval

Boards are risk-averse by design. When presenting your strategy, frame governance as risk mitigation. Use the Nonprofit Governance Policy Template from Fundraising.AI to demonstrate alignment with industry standards.

For a deeper dive into aligning your funding strategy with these governance principles, review our Nonprofit AI Funding Guide 2025.

Precision Fundraising: Balancing Automation with Donor Trust

A common fear among nonprofit leaders is that AI will “depersonalize” fundraising, turning relationships into transactions. This fear stems from a misunderstanding of the technology’s best use case. The goal is not to automate the relationship; it is to automate the research so you can focus on the relationship.

This is the concept of “Precision Stewardship.” Instead of sending thousands of generic appeals, use AI to analyze donor signals and identify the few dozen supporters who are ready to engage. FundRobin’s Smart Grant Matching exemplifies this approach by filtering funding opportunities based on nuanced mission alignment, saving development directors hundreds of hours of research time. This is especially vital when developing strategies for small nonprofits with limited resources.

Transparency as a Trust Builder

The antidote to donor skepticism is radical transparency. When you use AI, be open about it. Informing major donors that you utilize advanced data analytics to maximize the impact of their dollar positions your organization as a forward-thinking steward of resources. It signals efficiency, not impersonality.

Ethical boundaries are paramount here. Generative AI should assist in drafting the structure of an appeal, but the emotional core—the story of impact—must remain human. For more on this balance, see our AI Nonprofit Funding Strategic Guide.

The ‘Infrastructure-First’ Tech Stack: Selecting Safe Tools

Selecting the right tools is no longer about features; it is about security architecture. In the “Infrastructure-First” mandate, data privacy is the non-negotiable gatekeeper.

Leaders must be wary of “shiny object syndrome.” Many low-cost AI tools operate on a business model that monetizes user data to train their models. For a nonprofit handling sensitive beneficiary data or donor lists, this is a compliance nightmare waiting to happen.

The Compliance-First Checklist:

- Data Sovereignty: Do you retain full ownership of your data, with no ownership claim from the vendor?

- No-Training Guarantee: Does the vendor explicitly state they will not use your data to train their public models?

- Encryption Standards: Is data encrypted both at rest and in transit?
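The three checks above can be recorded in a consistent form so every vendor is judged the same way. This is an illustrative sketch only: the `VendorAssessment` fields and the `failed_checks` helper are assumptions made for this example, and the answers still have to come from reading each vendor's terms and security documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorAssessment:
    """Answers gathered from a vendor's terms (hypothetical structure)."""
    name: str
    vendor_claims_data_ownership: bool
    no_training_guarantee: bool
    encrypted_at_rest: bool
    encrypted_in_transit: bool


def failed_checks(v: VendorAssessment) -> list[str]:
    """List which of the three checklist items the vendor fails."""
    failures = []
    if v.vendor_claims_data_ownership:
        failures.append("data sovereignty")
    if not v.no_training_guarantee:
        failures.append("no-training guarantee")
    if not (v.encrypted_at_rest and v.encrypted_in_transit):
        failures.append("encryption standards")
    return failures


if __name__ == "__main__":
    tool = VendorAssessment("ExampleTool", True, False, True, False)
    print(tool.name, "fails:", failed_checks(tool) or "nothing")
```

An empty list means the vendor clears the checklist as written here; any entry is a reason to pause before sharing beneficiary or donor data.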

According to Databricks’ 2025 Strategy Guide, organizations must prioritize platforms that offer isolated environments. This is the core philosophy behind FundRobin’s Security Architecture: providing enterprise-grade security where nonprofit data is utilized strictly for the organization’s benefit, never for public model training. Investing in secure infrastructure now prevents costly data breaches and reputational damage later.

Leading Through Change: Bridging the Expertise Gap

The final, and perhaps most challenging, component of the 2026 mandate is cultural. You cannot govern AI if your staff fears it. The “Expertise Gap”—the belief that one must be a coder to use these tools—creates resistance. Organizations can overcome this by leveraging semantic funding discovery tools that prioritize user-friendly interfaces over technical complexity.

Leadership’s role is to dismantle this fear by reframing AI as “augmentation,” not “replacement.” This requires creating an environment of psychological safety. Staff must feel secure enough to admit what they don’t know and experiment with new workflows without fear of obsolescence.

The ‘AI Champion’ Model

Rather than hiring expensive external consultants, identify internal “AI Champions”—staff members who show natural curiosity. Empower them to test safe tools and report back. This bottom-up approach to innovation, guided by top-down governance, bridges the gap between strategy and execution.

Frequently Asked Questions

What is the ‘Productivity Paradox’ in nonprofit AI adoption?

The Productivity Paradox is the phenomenon where introducing AI tools without a strategy initially increases workload and anxiety. Instead of saving time, staff spend hours managing disparate tools, learning new interfaces, and correcting errors. The solution is to slow adoption and build a governance framework before adding more software.

How does using AI impact nonprofit grant eligibility and compliance?

While AI adoption is encouraged, “Shadow AI” (undisclosed usage) is increasingly penalized by funders. As of 2025, many grantmakers are moving toward requiring AI disclosure statements. Using compliant tools ensures you meet these evolving criteria without jeopardizing eligibility.

Is using AI for grant writing considered cheating or unethical?

No, provided it is used for “assisted” drafting rather than deceptive generation. Using compliant tools like FundRobin, which rely on “Grounded AI” to ensure facts are accurate and cited, is a strategic asset. Blindly copying output from generic chatbots without verification, however, carries significant reputational risk.

What is the first step in creating a nonprofit AI governance policy?

The first step is conducting a Mission-Alignment Audit to identify high-risk versus high-reward use cases. Immediately afterward, draft a preliminary “Acceptable Use” one-pager and designate an officer responsible for AI (even if it is a part-time role) to oversee compliance.

How do we communicate our use of AI to major donors without losing trust?

Adopt a “Transparency-First” approach, informing donors that AI handles backend data analysis to free up staff for personal interaction. Frame the technology as a tool for “Precision Stewardship” that ensures more of their donation goes to impact rather than administrative overhead.

What are the data privacy risks of using free AI tools for fundraising?

Public models (like the free tier of ChatGPT) often reserve the right to use your input data to train their systems, posing a serious privacy risk for donor data. “Enterprise” or private models (like FundRobin) encrypt data and commit to not using it for training, which supports compliance with GDPR and donor privacy standards.

How can leaders manage staff burnout and resistance to AI adoption?

Manage resistance by prioritizing psychological safety and framing AI as a tool for augmentation, not replacement. Acknowledge the burnout staff feel from the “Productivity Paradox” and involve them directly in the policy creation process to give them a sense of control and ownership over the transition.

Key Takeaways:

- The ‘10% Policy’ Liability: More than 90% of nonprofits lack a formal AI policy, exposing them to legal and reputational risk. Documenting governance now is the highest-ROI activity for 2025.

- The ‘Productivity Paradox’ is Real: Adopting tools without a strategy increases burnout. Shift focus from “doing more” to “building infrastructure.”

- Grant Compliance is Tightening: Funders are scrutinizing “Shadow AI.” Use “Grounded AI” tools like FundRobin that prioritize citations and compliance over generic generation.

- Mission-Aligned Automation: Conduct an audit to determine which workflows must remain human (donor empathy) and which are ripe for AI (grant discovery).

- Data Privacy is Non-Negotiable: Never feed donor data into public models. Invest in enterprise-grade tools with “No-Training” guarantees to protect your organization.

- Transparency Wins Trust: Proactively communicating your AI use to donors and boards builds credibility and positions your nonprofit as a forward-thinking steward of resources.

Conclusion

The 2026 Infrastructure Mandate is not a call to buy more software; it is a call to mature your operations. The nonprofits that thrive in the coming years will not be the ones with the most tools, but the ones with the strongest governance. By prioritizing policy, security, and human-centric strategy today, you are not just solving the burnout of the present—you are securing the mission for the future. Start smart, govern wisely, and let your infrastructure be the catalyst for your impact.

The 2026 Nonprofit AI Technology Stack: What to Implement First

Building a governance-compliant AI technology stack requires a phased approach that balances capability with risk management. Based on adoption patterns across hundreds of nonprofits, the following implementation sequence minimises disruption while maximising early wins:

Phase 1: Grant Discovery and Matching (Weeks 1-4)

AI-powered grant discovery represents the lowest-risk, highest-reward entry point for nonprofit AI adoption. These platforms analyse your organisational mission, programmes, and eligibility criteria against thousands of active funding opportunities, surfacing the most relevant matches automatically. Unlike traditional database searches that rely on keyword matching, modern AI grant matching uses semantic understanding to identify opportunities you might otherwise miss due to terminology differences between your language and the funder’s. Implementation requires minimal change management since grant research is typically already a staff responsibility. The AI simply does it faster and more comprehensively, freeing fundraising staff to focus on relationship building and proposal development.

Phase 2: Proposal Drafting and Review (Weeks 4-8)

Once grant discovery is operational, the next layer adds AI-assisted proposal writing. This is where governance protocols become critical. Organisations must establish clear policies about how AI-generated content is reviewed, who approves it, and how the organisation’s authentic voice is preserved. The “60/40 human-in-the-loop” model, where AI handles roughly 60% of initial drafting while humans retain 40% control over strategy, voice, and final review, has emerged as the gold standard for maintaining both efficiency and integrity. Funders increasingly expect applicants to disclose AI usage, making transparent governance a compliance requirement rather than an optional best practice.

Phase 3: Post-Award Management and Compliance (Weeks 8-12)

The most overlooked area of nonprofit AI implementation is post-award grant management. AI-powered monitoring and evaluation tools can automate milestone tracking, generate compliance reports, manage funder communication schedules, and flag potential issues before they become problems. Organisations that implement post-award AI alongside discovery and drafting tools report a 35% reduction in administrative burden and significantly improved funder satisfaction scores, which directly improves prospects for renewal funding.

AI Governance in Practice: Building the Framework

Effective AI governance for nonprofits requires more than a policy document filed in a shared drive. It demands an active, living framework that evolves with technology and regulatory requirements. Here is how successful nonprofits in 2026 are operationalising AI governance:

The AI Ethics Committee

Organisations with annual budgets above £500,000 should establish a dedicated AI ethics committee, or add AI oversight to an existing governance sub-committee’s mandate. This body reviews all new AI tool adoptions, monitors ongoing usage against the approved policy, and provides a clear escalation path for staff who identify concerns. The committee should include at least one trustee, one operational staff member who uses AI daily, and ideally one external advisor with technology governance expertise.

Data Protection and AI: The GDPR Intersection

UK nonprofits must navigate the intersection of AI tools and GDPR compliance with particular care. Any AI platform that processes beneficiary data, donor information, or staff records must be assessed against your Data Protection Impact Assessment (DPIA) framework. Key questions include: Where is data stored and processed? Is data used to train third-party models? How is data retention managed? Can individuals exercise their right to erasure? Organisations that address these questions proactively in their AI governance framework avoid costly compliance remediation and build trust with stakeholders.

Measuring AI ROI for Nonprofit Leaders

Board members and senior leaders need quantifiable metrics to evaluate AI investments. The most meaningful KPIs for nonprofit AI governance reporting include:

- Time saved on grant research and proposal writing, measured in staff hours per month.

- Application-to-award ratio improvement, comparing pre-AI and post-AI success rates.

- Cost per successful grant application, factoring in platform subscriptions against staff time saved.

- Compliance incident rate, tracking whether AI tools reduce or introduce governance risks.

Reporting these metrics quarterly gives trustees the evidence base they need to make informed decisions about continued AI investment and expansion.
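To make these KPIs concrete, here is a rough sketch of how they might be computed from figures most organisations already track. Everything in it is an assumption for illustration: the `PeriodStats` fields, the hourly rate, and the sample numbers are hypothetical, and the cost formula (staff time plus platform fees, divided by awards) is just one reasonable reading of "cost per successful grant application".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PeriodStats:
    """Grant activity for one reporting period (hypothetical fields)."""
    applications: int
    awards: int
    staff_hours: float      # hours spent on research and proposal writing
    platform_cost: float    # AI subscription fees for the period
    compliance_incidents: int


def award_ratio(p: PeriodStats) -> float:
    """Share of applications that resulted in an award."""
    return p.awards / p.applications if p.applications else 0.0


def cost_per_award(p: PeriodStats, hourly_rate: float) -> Optional[float]:
    """Staff time plus platform fees, per successful application."""
    if p.awards == 0:
        return None  # undefined when nothing was awarded
    return (p.staff_hours * hourly_rate + p.platform_cost) / p.awards


if __name__ == "__main__":
    # Made-up example figures, not benchmarks.
    before = PeriodStats(20, 4, 300.0, 0.0, 1)
    after = PeriodStats(32, 8, 180.0, 1500.0, 0)
    rate = 25.0  # assumed fully loaded hourly staff cost
    print("Hours saved:", before.staff_hours - after.staff_hours)
    print("Award ratio:", award_ratio(before), "->", award_ratio(after))
    print("Cost per award:", cost_per_award(before, rate), "->",
          cost_per_award(after, rate))
    print("Incidents:", before.compliance_incidents, "->",
          after.compliance_incidents)
```

Whatever formulas you settle on, keep them fixed from one report to the next so trustees are comparing like with like.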

